MuSA_RT animates a visual representation of tonal patterns – pitches, chords, key – in music as it is being performed.

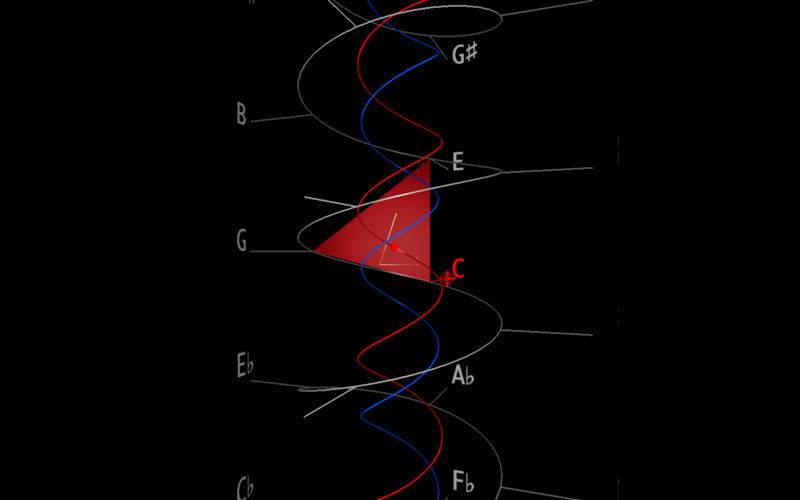

MuSA_RT applies music analysis algorithms rooted in Elaine Chew’s Spiral Array model of tonality, which also provides the 3D geometry for the visualization space.
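For reference, the core geometry of the Spiral Array as it is usually stated places pitches on a helix that turns a quarter circle per perfect fifth. Here k is a pitch's index along the line of fifths (C = 0, G = 1, F = −1), r is the radius and h the rise per step. Triads are represented by weighted averages of their member pitches, with weights w₁ ≥ w₂ ≥ w₃ > 0 (and likewise u₁ ≥ u₂ ≥ u₃ > 0) summing to 1. The specific parameter values MuSA_RT uses are not stated here.

$$
\mathbf{P}(k) = \begin{bmatrix} r \sin\frac{k\pi}{2} \\ r \cos\frac{k\pi}{2} \\ k h \end{bmatrix},
\qquad
\mathbf{C}_M(k) = w_1 \mathbf{P}(k) + w_2 \mathbf{P}(k+1) + w_3 \mathbf{P}(k+4),
\qquad
\mathbf{C}_m(k) = u_1 \mathbf{P}(k) + u_2 \mathbf{P}(k+1) + u_3 \mathbf{P}(k-3)
$$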

MuSA_RT interprets MIDI information received from any Core MIDI source to determine pitch names, maintain short-term and long-term tonal context trackers (each a Center of Effect, or CE), and compute the closest triads (3-note chords) and keys as the music unfolds in performance.
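The following is a minimal Python sketch of the CE and closest-triad steps, assuming the standard Spiral Array helix. It is not MuSA_RT's implementation. The radius `R`, height `H`, and `TRIAD_WEIGHTS` are illustrative assumptions, not the app's calibrated values. The hypothetical `fifths_index` helper is a naive fixed spelling that stands in for MuSA_RT's actual pitch-spelling step. A CE is computed here as a duration-weighted mean of note positions, and the closest triad is the major or minor triad center nearest to it.

```python
import math

# Illustrative Spiral Array parameters (assumptions, not MuSA_RT's calibrated values).
R = 1.0                          # helix radius r
H = math.sqrt(2.0 / 15.0)        # rise h per step along the line of fifths
TRIAD_WEIGHTS = (0.6, 0.3, 0.1)  # root >= fifth >= third, summing to 1

# Line-of-fifths index -> pitch name, for the spellings this sketch can produce.
NAMES = dict(zip(range(-5, 7), ["Db", "Ab", "Eb", "Bb", "F", "C",
                                "G", "D", "A", "E", "B", "F#"]))


def pitch_position(k):
    """Point on the pitch helix for line-of-fifths index k (C = 0, G = 1, F = -1)."""
    angle = k * math.pi / 2
    return (R * math.sin(angle), R * math.cos(angle), k * H)


def weighted_mean(points, weights):
    """Weighted average of 3D points."""
    total = sum(weights)
    return tuple(sum(w * p[i] for p, w in zip(points, weights)) / total
                 for i in range(3))


def fifths_index(midi_note):
    """Hypothetical naive pitch spelling: each MIDI pitch class gets a fixed
    index in -5..6 (Db..F#). MuSA_RT's own pitch-spelling step is not shown."""
    k = (7 * (midi_note % 12)) % 12  # a fifth is 7 semitones, and 7 * 7 = 1 (mod 12)
    return k - 12 if k > 6 else k


def center_of_effect(notes):
    """CE of (midi_note, duration) pairs: duration-weighted mean of pitch positions."""
    points = [pitch_position(fifths_index(n)) for n, _ in notes]
    return weighted_mean(points, [d for _, d in notes])


def major_triad(k):
    # Root P(k), fifth P(k+1), major third P(k+4).
    return weighted_mean([pitch_position(k), pitch_position(k + 1),
                          pitch_position(k + 4)], TRIAD_WEIGHTS)


def minor_triad(k):
    # Root P(k), fifth P(k+1), minor third P(k-3).
    return weighted_mean([pitch_position(k), pitch_position(k + 1),
                          pitch_position(k - 3)], TRIAD_WEIGHTS)


def closest_triad(ce):
    """Name of the major or minor triad whose center is nearest to the CE."""
    candidates = []
    for k in NAMES:
        candidates.append((math.dist(ce, major_triad(k)), NAMES[k] + " major"))
        candidates.append((math.dist(ce, minor_triad(k)), NAMES[k].lower() + " minor"))
    return min(candidates)[1]


# Usage: a C major chord, then an A minor chord, each held for one beat.
print(closest_triad(center_of_effect([(60, 1), (64, 1), (67, 1)])))  # → C major
print(closest_triad(center_of_effect([(57, 1), (60, 1), (64, 1)])))  # → a minor
```

In this sketch, separate short-term and long-term trackers would simply be two CEs computed over different spans of recent notes. Keys could be found the same way, by comparing a CE against key representations built from triad centers. How MuSA_RT weights the past in each tracker, and how it represents keys, is not specified here.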

MuSA_RT presents graphical representations of these tonal entities in context, smoothly rotating the virtual camera to provide an unobstructed view of the current information.

MuSA_RT 1.0 was commissioned by the MuCoaCo Lab as part of the Music on the Spiral Array . Real Time (MuSA.RT) project, and as companion software to the forthcoming Springer ORMS Series book “Mathematical and Computational Modeling of Tonality: Theory and Applications” by Elaine Chew, expected at the end of 2012.

The development of MuSA_RT 1.0 was supported in part by the United States National Science Foundation grant no. 0347988.